Feed Aggregator

Empty transactions can be dangerous

Inserting an empty transaction can be dangerous. Another server might request that transaction from this server and then receive an empty transaction with the same GTID as the original one. So this is only safe on slaves which will never become a master, or if all possible slaves have already processed this GTID.
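For reference, the statement sequence usually meant by "inserting an empty transaction" can be sketched as follows. This is only an illustration: the helper name `empty_transaction_statements` and the GTID format check are assumptions made for this example. The function only builds the SQL text and does not talk to a server.

```python
import re

# A single GTID has the form source_uuid:transaction_id.
GTID_PATTERN = re.compile(
    r"^[0-9a-fA-F]{8}(-[0-9a-fA-F]{4}){3}-[0-9a-fA-F]{12}:[1-9][0-9]*$"
)


def empty_transaction_statements(gtid):
    """Return the SQL statements that commit an empty transaction under `gtid`.

    Hypothetical helper for illustration; run the statements on the slave
    only if the conditions described above are met.
    """
    if not GTID_PATTERN.match(gtid):
        raise ValueError(f"not a single GTID: {gtid!r}")
    return [
        f"SET GTID_NEXT = '{gtid}'",    # claim the GTID for the next transaction
        "BEGIN",                        # open a transaction ...
        "COMMIT",                       # ... and commit it without any changes
        "SET GTID_NEXT = 'AUTOMATIC'",  # return to normal GTID assignment
    ]


if __name__ == "__main__":
    for stmt in empty_transaction_statements(
        "3E11FA47-71CA-11E1-9E33-C80AA9429562:23"
    ):
        print(stmt + ";")
```

Once this has been executed, the server reports the GTID as executed although none of the original changes were applied, which is exactly why another server fetching it later gets nothing.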


Replication Troubleshooting - Classic VS GTID

In previous posts I talked about how to set up MySQL replication, both Classic Replication (based on binary log information) and Transaction-based Replication (based on GTID). In this article I’ll summarize how to troubleshoot the most common MySQL replication issues, with a simple comparison of how to solve them in the two replication methods (Classic VS GTID).

There are two main operations we might need to do in a replication setup:

- Skip or ignore a statement that causes the replication to stop.

- Re-initialize a slave when the replication is broken and cannot be started anymore.

Skip or Ignore statement

Basically, the slave should always be synchronized with its master and hold the same copy of the data, but for several reasons there might be inconsistencies between them (an unsafe statement in SBR, a slave that is not read_only and was modified outside of replication, etc.), which cause errors and stop the replication, e.g. if the master inserted a record which was …

Taxonomy upgrade extras: gtid, replication,

MySQL 5.5 and 5.6 ?!

Just to confirm, the above channel failover steps are valid in Galera Cluster for both MySQL versions 5.5 and 5.6. Enjoy!!


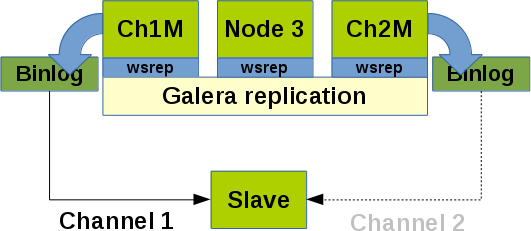

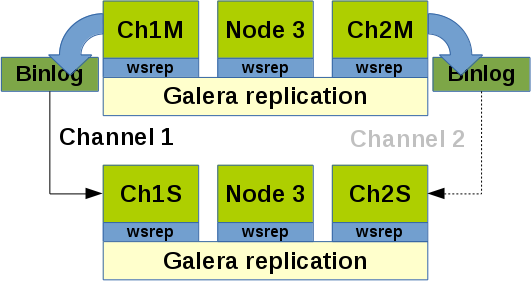

Replication channel failover with Galera Cluster for MySQL

Sometimes it is desirable to replicate from a Galera Cluster to a single MySQL slave or to another Galera Cluster. Reasons for this could be:

- An unstable network between two Galera Cluster locations.

- A separation of a reporting slave and the Galera Cluster so that heavy reports on the slave do not affect the Galera Cluster performance.

- Mixing different sources in a slave or a Galera Cluster (fan-in replication).

This article is based on earlier research work (see MySQL Cluster - Cluster circular replication with 2 replication channels) and uses the old MySQL replication style (without MySQL GTID).

Preconditions

- Enable the binary logs on 2 nodes of a Galera Cluster (we call them channel masters) with the log_bin variable.

- Set log_slave_updates = 1 on ALL Galera nodes.

- It is recommended to have small binary logs and relay logs in such a situation to reduce the overhead of scanning the files (max_binlog_size = 100M).

Scenarios

Let us assume that for some reason the actual channel master of …

Taxonomy upgrade extras: channel, galera, cluster, failover, replication, master, slave,

When active, FromDual causes Zabbix agent to bug

I installed FromDual on a MySQL server, and when I activate it the Zabbix agent switches between unreachable and reachable every 5 minutes. When I disable FromDual it starts to work fine again. I also lose data while FromDual is active: CPU data, memory usage, and more. Any clue how to solve this? Thank you


Zabbix_agent unreachable after installing FromDual

Hello, I installed FromDual on a MySQL 5.6 server and got it working fine, sending data. But now every 5 minutes I get the message that the Zabbix_agent is unreachable on that server. The agent is working fine and the network too. I get this error in the Zabbix log:

2916:20140618:125301.324 Zabbix agent item “FromDual.MySQL.check” on host “HOST” failed: first network error, wait for 5 seconds

2931:20140618:125309.359 Zabbix agent item “FromDual.MySQL.check” on host “HOST” failed: another network error, wait for 5 seconds

2931:20140618:125314.491 resuming Zabbix agent checks on host “HOST”: connection restored

Can anyone help me solve this? Thank you


MySQL Environment MyEnv 1.0.5 has been released

FromDual has the pleasure to announce the release of the new version 1.0.5 of its popular MySQL, MariaDB and Percona Server multi-instance environment MyEnv.

The majority of improvements happened on the MySQL Backup Manager (mysql_bman) utility.

You can download MyEnv from here.

In the inconceivable case that you find a bug in MyEnv please report it to our Bugtracker.

Any feedback, statements and testimonials are welcome as well! Please send them to feedback@fromdual.com.

Upgrade from 1.0.x to 1.0.5

# cd ${HOME}/product

# tar xf /download/myenv-1.0.5.tar.gz

# rm -f myenv

# ln -s myenv-1.0.5 myenv

Upgrade from 1.0.2 or older to 1.0.3 or newer

If you are using plug-ins for showMyEnvStatus create all the links in the new directory structure:

cd ${HOME}/product/myenv

ln -s ../../utl/oem_agent.php plg/showMyEnvStatus/

Replace the MyEnv section in ~/.bash_profile (make a backup of this file first) with the following new one:

# BEGIN MyEnv

# Written by the MyEnv installMyEnv.php script.

. /etc/myenv/MYENV_BASE …

Taxonomy upgrade extras: myenv, operation, mysql operations, multi instance, consolidation, release,

GTID In Action

In a previous post I was talking about How to Setup MySQL Replication using the classic method (based on binary logs information). In this article I’ll go through the transaction-based replication implementation using GTID in different scenarios.

The following topics will be covered in this blog:

- What is the concept of GTID protocol?

- GTID Replication Implementation

- Migration from classic replication to GTID replication

- GTID Benefits

What is the concept of GTID protocol?

GTID is a Global Transaction IDentifier which was introduced in MySQL 5.6.5. It is unique not only on the server where it originated but also among all servers in a replication setup.

GTID also guarantees consistency, because once a transaction is committed on a server, any other transaction having the same GTID will be ignored, i.e. a committed transaction on a master will be …

Taxonomy upgrade extras: gtid, replication,

Deadlock mysql

Furthermore, I noticed more than 700 files created BY ZABBIX in /tmp on only masters. They are named like : DeadlockMessage.tmp.17145 & ForeignKeyMessage.tmp.11416


COMMIT!

Kalasha,

Since autocommit is disabled, did you issue “COMMIT” after inserting those records ? If not, then you have to either enable autocommit or commit your DML statements manually (issue “COMMIT” or execute DDL statement)
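The effect of a missing COMMIT can be reproduced in a small self-contained sketch. Note the assumption: it uses Python's built-in sqlite3 module as a stand-in for MySQL, simply because it needs no server. It only shows that rows inserted in an open transaction stay invisible to a second connection until COMMIT; it does not model MySQL's REPEATABLE READ snapshot in the reading session. The table name `t` and the function names are made up for this example.

```python
import os
import sqlite3
import tempfile


def count_rows(conn):
    """Return the number of rows in table t as seen by this connection."""
    cur = conn.execute("SELECT COUNT(*) FROM t")
    try:
        return cur.fetchone()[0]
    finally:
        cur.close()  # finish the read so it holds no lock


def counts_before_and_after_commit():
    """Return the row counts a second connection sees before and after COMMIT."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "demo.db")
        writer = sqlite3.connect(path)
        reader = sqlite3.connect(path)
        try:
            writer.execute("CREATE TABLE t (id INTEGER)")
            writer.commit()
            # sqlite3 opens a transaction implicitly before the INSERT,
            # comparable to a session with autocommit disabled.
            writer.execute("INSERT INTO t VALUES (1)")
            before = count_rows(reader)  # the insert is not committed yet
            writer.commit()
            after = count_rows(reader)   # now the row is visible
        finally:
            writer.close()
            reader.close()
    return before, after


if __name__ == "__main__":
    print(counts_before_and_after_commit())
```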


MYSQL TOTAL RECORD COUNT ISSUE

HI Team,

The issue is due to autocommit mode being set to off in MySQL and the transaction isolation level being repeatable read. Because of this we are not able to view the latest record count. It shows only the records that were committed when I logged in to mysql.

Pls suggest how to fix this


An example

4187:2014-06-03 16:06:51.934 - INFO: FromDual Performance Monitor for MySQL (0.9.3) run started.

4187:2014-06-03 16:06:51.934 - INFO: FromDualMySQLagent::setAgentLock

4187:2014-06-03 16:06:51.934 - INFO: Read configuration from /usr/local/mysql_performance_monitor/etc/FromDualMySQLagent.conf

4187:2014-06-03 16:06:53.350 - ERR : got TERM signal. Cleaning up stuff an exit (rc=1).

4187:2014-06-03 16:06:53.351 - INFO: FromDualMySQLagent::removeAgentLock

4187:2014-06-03 16:06:53.351 - INFO: FromDual Performance Monitor for MySQL run finshed (rc=1).

It’s less than 2 seconds. Sometimes, in our biggest database (150Gb), we have some slow queries ? Maybe it’s blocking the MPM ?
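The duration of a run can be read off the log timestamps. The following is a rough sketch that assumes the line layout shown above (PID, colon, timestamp with milliseconds, then " - LEVEL: message"). The function names are made up for this example and are not part of the monitor.

```python
from datetime import datetime


def parse_log_time(line):
    """Extract the timestamp from a 'PID:YYYY-MM-DD HH:MM:SS.mmm - LEVEL: ...' line."""
    _pid, rest = line.split(":", 1)    # drop the leading process id
    stamp = rest.split(" - ", 1)[0]    # keep only the timestamp part
    return datetime.strptime(stamp, "%Y-%m-%d %H:%M:%S.%f")


def run_duration_seconds(lines):
    """Return the time between the earliest and the latest log line in seconds."""
    times = [parse_log_time(line) for line in lines]
    return (max(times) - min(times)).total_seconds()


if __name__ == "__main__":
    log = [
        "4187:2014-06-03 16:06:51.934 - INFO: FromDual Performance Monitor "
        "for MySQL (0.9.3) run started.",
        "4187:2014-06-03 16:06:53.351 - INFO: FromDual Performance Monitor "
        "for MySQL run finshed (rc=1).",
    ]
    print(run_duration_seconds(log))
```

For the two lines in the example this gives about 1.4 seconds between "run started" and "run finshed".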


wrong number of rows

Can you repeat this at the command line? This is such a basic problem that I expect that it has nothing to do with MySQL but with your environment/application/set-up or testing method…


Check interval

An mpm agent run should not be started before the previous one has ended. To ensure this we have some internal checks (and a kill). So the interval should be bigger than the duration of the longest run. Typically we run mpm every 10 seconds and that is fine in most cases. Our biggest DB is around 250 Gbyte, so I am wondering why your runs take so much longer. The timestamps in the log (debug) file should show where most of the time is spent.
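The general idea of such a check can be sketched with a lock file. This is not the actual mpm code: the function names and the lock path are assumptions for illustration, and unlike the agent described above the sketch simply refuses to start instead of killing the earlier run.

```python
import os


def acquire_run_lock(lock_path):
    """Try to create the lock file atomically; return True if this run may start."""
    try:
        # O_EXCL makes the call fail if the file already exists, so only
        # one process can win the race.
        fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    except FileExistsError:
        return False  # a previous run still holds the lock
    with os.fdopen(fd, "w") as handle:
        handle.write(str(os.getpid()))  # record the owner for debugging
    return True


def release_run_lock(lock_path):
    """Remove the lock file so that the next run can start."""
    try:
        os.remove(lock_path)
    except FileNotFoundError:
        pass  # already removed, nothing to do


if __name__ == "__main__":
    path = "/tmp/example_agent.lock"  # hypothetical location
    if acquire_run_lock(path):
        try:
            print("run started")
        finally:
            release_run_lock(path)
    else:
        print("previous run still active, skipping this interval")
```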


MySQL Total Record count issue

Hi Team, I am using MySQL 5.6 on a production server (hosted at AWS). After inserting records online they are committed in the database, but when executing select count(*) from table_name I am not getting the latest count. After exiting from mysql and logging in again I am able to view the latest count. Transaction isolation in mysql is repeatable-read (both in global and status variables). Kindly help to fix this issue


Enlarge update interval

Maybe by postponing the interval between two checks, it could be better ? I noticed it’s happening only on huge databases


TERM signal

As mentioned earlier (now with a nicer error message), somebody or something is killing the running mpm agent job. This is typically the next mpm agent job, which has had to wait too long for the running one. The question is rather: why does the first one take so long…


Great !

Hi ! Looks like it’s working for the master module.

Unfortunately, I still have sometimes a lack of data and that new error in logs :

4096:2014-05-28 16:46:49.051 - ERR : got TERM signal. Cleaning up stuff an exit (rc=1).


bug in master module found and fixed

The following fixes in the file lib/FromDualMySQLmaster.pm should do the job:

- $$status_ref{'Binlog_file'} = '';

+ $$status_ref{'Binlog_file'} = 'none';

- $$status_ref{'Binlog_do_filter'} = '';

- $$status_ref{'Binlog_ignore_filter'} = '';

+ $$status_ref{'Binlog_do_filter'} = 'none';

+ $$status_ref{'Binlog_ignore_filter'} = 'none';

- $$status_ref{'Binlog_do_filter'} = $ref->{'Binlog_Do_DB'};

- $$status_ref{'Binlog_ignore_filter'} = $ref->{'Binlog_Ignore_DB'};

+ $$status_ref{'Binlog_do_filter'} = $ref->{'Binlog_Do_DB'} eq '' ? "''" : $ref->{'Binlog_Do_DB'};

+ $$status_ref{'Binlog_ignore_filter'} = $ref->{'Binlog_Ignore_DB'} eq '' ? "''" : $ref->{'Binlog_Ignore_DB'};

I hope we will have a new release soon...

By the way I would say that this bug was introduced in v0.9.1 already, more than 13 months ago... :-(
